I would hope so - but simply trying at this point to provide some examples as per the request of one of the other posters. I am thinking that getting some diverse examples would allow us as a group to refine the thoughts of what this list is and what should be on it.

Thanks for the example list @matthew.strother

While it’s important to discuss a range of examples, there will be a never-ending debate about what’s in and what’s out. If you ask 5 people, you’ll likely get 8 different opinions on what’s in/what’s out, let alone on what to call it. No one person will have the answer, but as a collective of clinical leaders supported by a range of domain experts and MoH, I think we will get to some form of agreement on a consistent approach, even if many may disagree with it. Some of the research links below provide us with examples of how other countries and specialties have decided to approach this issue. We can learn from these and adapt our own approach/es.

There are typically three things people cannot seem to agree on:

- completeness (false negatives) - ie. major problems aren’t listed

- clutter (false positives) ie. minor and/or inactive problems create complexity

- a common approach

Even within this group we’ll have disagreements about:

- including social history/living situations in these lists

- the level of specificity (specialists typically want more detail on their specialty area and less on others eg. An Orthopod may not care about their Neuro details)

- including information provenance (data origin) because they might not trust their colleagues (junior or just differing opinion)

- including inactive problems

Here’s some good research I’ve used to try and help me understand how to approach this problem.

Summary of the below links:

Clinician opinions of problem lists are widely varied and standardisation is recommended but is difficult because of a lack of any consistent approach.

UMLS core project (2010) was a US project aiming to solve what we’re looking to achieve. Since then, there have been 52 citations of this study with the aim of solving the problem or sub-sets of this (eg. Nursing subset and Oncology subset).

https://academic.oup.com/jamia/article/17/6/675/2909110

https://orionhealth.com/nz/knowledge-hub/blogs/the-problem-with-problem-lists/

Out of curiosity - why include the last item among the academic papers? It’s not a primary research article, and it is from a corporation with significant financial conflicts clouding any objective opinion. I ask because, moving from other research domains, I have been struck that HIT has a much more lax approach to declaration of COI and regards much of industry as a partner rather than as a company trying to make money. I’m genuinely curious - not ‘virtue signaling’. My clinical area, oncology, is wrestling with a culture that is academically heavily encumbered in industry relationships and is trying to sort out what is acceptable, and how far the separation between industry and academia/providers must be.

Congestive heart failure - cardiac echo 22.6.18 with LVEF 25% - NYHA Class 4

Acute myocardial infarction - STEMI 19.5.18, stent to RCA and LAD

Type II Diabetes mellitus - HgbA1c 25 30.5.2019

Diabetic nephropathy - eGFR 40 30.5.2019

Stage II prostate adenocarcinoma

Gleason 3+5=7, s/p radical prostatectomy 12.4.2015

Alcohol use - 12 standard drinks/day

Tobacco use - 25 cigarettes/day

Unstable housing - lives in garage

Appendectomy 1944

Tonsillectomy 1948

Hi everyone. All great conversations and I wonder how we can move forward with getting a consensus on the problem we are trying to solve first?

Do we have some problem statements at the beginning of the Wiki? Would it be good to narrow our initial scope to 1-2 use cases? I’m not sure I agree with the statement of a centralised repository of clinically relevant issues, as I was seeing a more modern API/query based approach. What are others’ thoughts?

To wade in on the discussion: is this a master list to rule them all, or a dynamic list that can be persisted at any time based on your business rules about what you want to visualize? I would strongly urge us to consider the latter. In this way we can move away from debate about what one clinician wants to see versus another, and we are not suggesting clinicians have to go out of their BAU systems to enter/update data in a master list.

In order for the latter to work we do need a minimum data set that each system can conform to, and modern EMR/PMS systems that can use APIs. I think it is important to remember that not all data needs to be surfaced for everyone - again, business rules can help deal with this complexity.

I personally think a problem list is a view of a patient’s current health issues, and by simply having an end date we can use the same data model for past health history as well as current.

For example consistently capturing the following would be a great start to a minimum data set.

Date of onset

Diagnosis or Procedure (SNOMED )

Stage or severity (e.g. grade of cancer)

Clinicians (Name, Role, Specialty, Professional Identifier (HPI))

End date

?Provenance (source of the diagnosis)
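To make the minimum data set above a bit more concrete, here is a rough sketch of how those fields might sit in one record, and how the end date alone could separate "current problems" from "past history". This is only an illustration under my own assumptions: the class and function names, the placeholder codes and identifiers, and the dates are all made up, and a real implementation would carry actual SNOMED CT concept ids and HPI numbers.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class Clinician:
    name: str
    role: str
    specialty: str
    hpi: str  # professional identifier; values below are invented placeholders


@dataclass(frozen=True)
class Entry:
    onset: date
    code: str  # a SNOMED CT concept id would go here; placeholders used below
    display: str
    clinician: Clinician
    severity: Optional[str] = None    # stage or severity
    end: Optional[date] = None        # None means still ongoing
    provenance: Optional[str] = None  # source of the diagnosis


def is_current(entry: Entry, on: date) -> bool:
    """An entry is current if it has started and has not ended by `on`."""
    return entry.onset <= on and (entry.end is None or entry.end > on)


def current_problems(entries: List[Entry], on: date) -> List[Entry]:
    return [e for e in entries if is_current(e, on)]


def past_history(entries: List[Entry], on: date) -> List[Entry]:
    return [e for e in entries if e.end is not None and e.end <= on]


# Illustrative data only: names, identifiers, codes and dates are made up.
dr_a = Clinician("Dr A", "Consultant", "Cardiology", "HPI-PLACEHOLDER-1")
entries = [
    Entry(date(2018, 6, 22), "SCT-PLACEHOLDER-1", "Congestive heart failure",
          dr_a, severity="NYHA Class 4", provenance="cardiac echo"),
    Entry(date(1944, 1, 1), "SCT-PLACEHOLDER-2", "Appendectomy",
          dr_a, end=date(1944, 1, 1)),
]
today = date(2019, 6, 1)
```

With this shape, `current_problems(entries, today)` and `past_history(entries, today)` are two views over the same records, so nobody has to maintain a separate history list.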

I would concur with above. Can you provide an example? How does what you present translate to/from the example I provided? How does the approach you propose function with a complex ecosystem of multiple clinicians with differing objectives, potentially working in different software systems in different physical locations? I agree in principle with your arguments, but need more of a concrete example to understand.

We have been thinking about lists in general. At the moment the Ministry runs the “Medical Warning System” and most of the sector either doesn’t use it or doesn’t see it as authoritative.

I think this is for a few reasons:

- The naming of the “system” creates a preconception about what it is

- Our ontology around MWS is one of level of danger associated with the warning. There are no other ontologies for categorising the information into different types (eg. Soc Hx, Fam Hx, allergies, community visiting, problems etc).

- Clinicians are not trained to blindly trust what other people do/say/write - it is more trust but verify and is based on a clinician as an autonomous unit of care (also says something about our lack of team based care and systems thinking). This leads to duplication and mistrust of what is in a system.

I’m thinking about a solution that works for us all, is broader and could encompass a “problem list”. There are some lists which we should all work on a single copy of, eg. Medicines (objective fact/event based) or implants (objective fact/event based). There are also lists which are subjective, based on clinical reasoning or inductive logic (eg. Problems/issues) - yes, I realise some of these might be facts, but consider second opinions as a refutation of an absolute position.

- Change the name of MWS to something else

- Add a category field to MWS that lets people add the type of content they are adding to the system so we can show filtered lists of different things (including problems).

- We need a way for people to “believe” what is in there. This probably means we need a self-sovereign sorting algorithm. Ie. I choose as a clinician how I want the list ordered (by some combination of category, date added, who added it ie. role/person etc). We need to be careful not to hide data - just in case. We could also consider crowd sourcing other clinicians views on things - say using a like button or a reddit style up and down vote system.

Result is that you see in this system the lists you want to see, contributed to by others but in an order that works for you.
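As a rough illustration of the "category field plus my own ordering" idea, something like the sketch below would do it. To be clear, this is not how MWS works today; the field names, the `personal_view` function and the sample items (including the allergy) are invented for the example. The one design point it tries to show is that items in categories I haven't ranked are pushed to the end rather than hidden.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass(frozen=True)
class ListItem:
    text: str
    category: str       # hypothetical category field, e.g. "problem", "allergy"
    added_on: date
    added_by_role: str


def personal_view(items: List[ListItem], category_order: List[str],
                  newest_first: bool = True) -> List[ListItem]:
    """Order items by the viewer's preferred categories, then by date added.

    Nothing is filtered out: unranked categories sort after ranked ones.
    """
    rank = {category: i for i, category in enumerate(category_order)}
    unranked = len(rank)

    def sort_key(item: ListItem):
        day = item.added_on.toordinal()
        return (rank.get(item.category, unranked), -day if newest_first else day)

    return sorted(items, key=sort_key)


# Made-up sample items for illustration.
items = [
    ListItem("Penicillin allergy", "allergy", date(2015, 3, 1), "GP"),
    ListItem("Unstable housing", "social history", date(2019, 5, 30), "Social worker"),
    ListItem("Congestive heart failure", "problem", date(2018, 6, 22), "Cardiologist"),
    ListItem("Type II diabetes mellitus", "problem", date(2019, 5, 30), "GP"),
]
view = personal_view(items, ["problem", "allergy"])
```

Each clinician would pass their own `category_order`, so two people looking at the same underlying data get differently ordered lists without either of them editing the data.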

We are currently working on some requirements for our next version of MWS and will post where we are at - this will include some questions we are looking for opinions on.

I agree with Anna-Marie & Jon that this list needs to be defined in terms of a solvable problem with an extensible solution.

At its core, this is a problem where nested within one problem list is a list of problem lists. The MWS is an example. We can and need to discuss what is a minimum loveable product for a MWS list and debate if SNOMED is a way to provide structure to a list that covers more than medication allergies. So I agree with Jon that this is a worthwhile approach to this particular important problem.

Then Anna-Marie’s point kicks in. Is this MWS list, then nested within a higher order list, which in turn is nested again within a higher order? This hierarchy of nesting continues until the whole list is irrelevant to anyone but the patient, who then gives up using it because no healthcare provider does either.

This needs to be a defined solution to a solvable problem within a given therapeutic contract. In discussions with clinicians at MDHB, the first order problem is about identifying the problems that are being addressed in this admission. The second wish is that this list can be communicated back easily to primary care. For our clinicians this would define the core problem they are seeking to solve.

This should be “easily” coded in a functional paradigm to create such a problem list that delivers an immediate benefit but that at a later date can become part of a nested hierarchical tree of similar lists for different owners or users, that can be traversed as need requires. It does not address the question of who is the apex owner, and I would argue this should be the patient or their whanau.

Such an approach separates the infrastructure tree, from the clinical lists or branches on the tree. Each branch can be different, providing it joins to the common trunk. The trunk can be maintained and developed independently of all branches, without stopping any branch from adding value to the clinical process or patient outcome.
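For what it’s worth, here is a minimal sketch of what I mean by a tree of lists that can be traversed as need requires. The node type, the `walk` and `items_for` functions and the sample branches are my own invention for illustration, not an agreed design; the point is only that each branch is an ordinary list hanging off a common trunk, and the trunk code knows nothing about what any branch contains.

```python
from dataclasses import dataclass
from typing import Iterator, List, Tuple


@dataclass(frozen=True)
class ListNode:
    owner: str
    items: Tuple[str, ...] = ()
    children: Tuple["ListNode", ...] = ()


def walk(node: ListNode, path: Tuple[str, ...] = ()) -> Iterator[Tuple[Tuple[str, ...], str]]:
    """Yield (path of owners, item) for every item in the tree, depth first."""
    here = path + (node.owner,)
    for item in node.items:
        yield here, item
    for child in node.children:
        yield from walk(child, here)


def items_for(node: ListNode, owner: str) -> List[str]:
    """All items on the branch(es) belonging to `owner`, including nested ones."""
    return [item for path, item in walk(node) if owner in path]


# Illustrative tree: the patient/whanau at the apex, two branches underneath.
tree = ListNode("patient/whanau", children=(
    ListNode("MDHB admission", items=("Congestive heart failure", "Diabetic nephropathy")),
    ListNode("MWS", items=("Drug allergy (placeholder)",)),
))
flat = list(walk(tree))
```

Here `items_for(tree, "MDHB admission")` gives just the admission list, while `items_for(tree, "patient/whanau")` gives everything, which is the sense in which the apex owner sees the whole tree.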

Really interesting thread with no easy answer. I feel this conversation is becoming complex and the problem definition is becoming murky based on the original scope. I feel the scope definition needs some wider clinical user research, beyond this passionate thread, before we stumble any further on our own assumptions of what we think this is/isn’t.

Regarding the problem definition, as it is listed at the beginning of the thread, it largely discusses the overarching functional requirements rather than the detail ‘content’ of what conditions should be an option or not. I think we need to come back to the scope of the problem because I think it is somewhat confusing, at least for me. It seems to me that many contributors to this thread seem to be more interested in defining the content within the list than the overarching scope/purpose of the list.

Another point I noticed after re-reading the scope, was that it excluded allergies/adverse reactions. Based on that scope definition, I had assumed that the MWS (in its current form) was out of scope. Following @jon_herries mention of MWS, I did some research and found the current definition of the MWS:

“Is an alert service…[which]…warns health care providers of known risk factors that could be important when making clinical decisions about patient care. This includes drug allergies or medical conditions.”

That last part is key to this discussion because the current scope of the MWS is technically meant to be solving part of our problem, but it isn’t working well for us right now. I personally would prefer we looked beyond this small, highly engaged, community and put it to the masses. This is where I feel that MoH and HISO could properly fund some user research/surveys to clinical groups AND implementors to understand why they don’t use/integrate with MWS. Is it that they don’t trust their colleagues and/or the source systems? While I’m glad to hear that MoH are looking at re-thinking ‘lists’ and the MWS itself, I feel there should be more research and consultation with clinical users (beyond this interest group) before conjuring up requirements of a solution which then is perceived to have been forced onto the clinical user and implementer communities.

Another comment would be that the MWS, based on the 2003 MWS data dictionary, is a significant part of what we’re talking about except requiring clearer definition of scope and more modern, web based interfaces to solving this problem.

@matthew.strother, to your point earlier in the thread on those research articles, good point. And you’re right, it’s not a research article or academic paper by any means. You’ll see that I was the author of the blog, which was an opinion piece being used as a marketing tool. At the time I was at Orion, I had done some research and was asked by Orion to author a blog, which is attached. I wrote the blog to emphasise, to their prospective customers, that it’s not easy and requires a large amount of effort, and there’s no single ‘right way’ to approach this issue. I wasn’t endorsing anyone’s products; I was attempting to present an alternative industry perspective: implementers need to understand what a problem list means and how to implement it consistently if it is to be adopted and to succeed at improving patient outcomes and reducing patient risk.

Thanks - just curious.

I would agree with @michael.hosking - I think it sounds as though refinement of the MWS would have high overlap with this discussion.

I also agree, this conversation is getting very complicated very quickly.

How does this type of work move forward - I think it heavily relates to ?MoH? vision of shared information platforms and minimum datasets. Is the next step identifying champions in appropriate organizations to establish a workshop format?

All,

Some interesting points raised so far. In terms of what we need to do moving forward, though, we probably need to try to summarise things. Here are some of the points I have picked up within this wiki and in the discussion prior to the wiki set up.

- The origin of this discussion stemmed from work to look at defining the requirements of a problem list for use within secondary care. From discussion so far I would agree that should be the focus. @amscroggins made some good points about the potential for this to be a local list to start off with, which is a good idea.

- As @KarenDay highlighted, problem lists do pose a wicked problem: they mean different things to different people, and without curation you cannot rely on them being up to date. As @matthew.strother pointed out, curating these and keeping them accurate would take time and skill to do right, especially if access to the list is limited to just a few curators.

- As clinicians, I believe that we should be able to apply our clinical reasoning to come up with a list that is relevant to the care we are providing. The significance of some issues may not be applicable to all, and this is why I feel that the concept of the Ongoing Clinical Conditions under Active Management (OCCAM’s) List could be really useful here. If applied well it could solve the curation issue and promote interdisciplinary practice. However it may need a filter on it, so you could see all or maybe just those items relevant to you. Looking at the screenshot in the recent article in ehealth news, it looks like WDHB have developed a similar filter in their electronic clinical notes. But the source of the problem list in the screenshot appears to remain the same. @lara are they the same list?

- @alastairk showed how awesome SNOMED CT can be. If we could have these lists done in a way where there was the potential to use NLP to mark up your list items to SNOMED codes, rather than confining it to a refset or pick list, that would be great.

- @david.hay had mentioned that the iIPS may be a good starting point, and I see that there are already FHIR resources for lists, which may be something for the MWS to utilise.

- @jon_herries raises some good points about the potential of something similar to the “Medical Warning System”. However a system like this is essential to ensure allergies are not missed, and I am sure some DHBs in the country do use this and do send allergy alert information when captured. But if

then addressing this should definitely be the first priority. I also agree with @michael.hosking that any work to refine MWS would need wider clinical consultation with users beyond this interest group.

Finally, echoing @matthew.strother’s comment: what do we do next?

- Are there any examples of electronic lists working well here in NZ that we can draw from

- Is there a time frame for when work on sorting out the MWS nationally will start happening, should we wait for this or carry on deliberations trying to find an interim solution

- Can we start crafting an agenda for workshops and refine things further so we can overcome inertia and get some runs on the board

Hey All,

Great comments and thoughts!

I want to pose the question - is there actually value in having a discussion at HiNZ on this topic? I don’t think there is a lot of value yet.

As has been recognised a few times, the initial impetus for this discussion was me seeking advice on implementation of a problems list for the reasonably restricted use cases of facilitating inter-team handover and ward rounding. The question of a national list grew from that initial post somewhat organically.

I have now spent quite some time reading and thinking about this problem, including the contributions of the erudite authors in this post… I think we can all see the challenges and problems, and the fact that it is a “wicked problem” has been stated several times.

i.e. I think this is the sort of thing which would be best attempted after we have successfully implemented more modest products at the national level, or as the expansion of a successful regional product.

I’m happy to try and tee up some time at HiNZ/during the CiLN meeting, but from the above discussions, I’m not at all confident that we will come up with anything concrete, and even if we do, the way forwards to implementation is entirely unclear (to me). It may just be an exercise of talk for talk’s sake.

Cheers

Mat

BTW - We will continue to attempt to try and get a problems list working for hospitals in our region… I’ll let folks know how we go, but don’t hold your breath!

No problem. Just a wee bit busy at the moment but will work this up for you?

I believe a “problem list” is more a “stuff about me” list.

It should exist as a single source of truth in a central place, and all HCPs involved in the care of a patient should have FHIR API based access to it when they are using their clinical record. Thus it would be a MLOM model. Of course it should be standards based (SNOMED CT, Read etc) and curation should be by any HCP using it. As each “list” was curated, the previous list should remain available for historic access. In a perfect world the patient should have access. Its use is clear… it is a prompt for siloed users to remember the important key things about the patient. To this end it would have allergies and sensitivities, social history, disease lists, sexual orientation, smoking history, and anything else thought important to the HCP.

The way it would work is that any HCP clicking a “problem list” in his/her clinical application would draw down the list and make modifications, additions etc, and when moving away the updated list would be available for the next HCP involved in the patient’s care.

Just saying… this as a concept is easy but the devil is in the detail
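To show the concept only (draw down, modify, hand on, keep the previous versions), a toy in-memory sketch might look like the one below. Everything here is an assumption for illustration: the `SharedList` class and its method names are invented, there is no FHIR or network access in it, and it ignores exactly the hard details such as two HCPs editing at once, authentication and coding.

```python
from typing import List, Tuple

# (who saved it, the items as they stood at that point)
Version = Tuple[str, Tuple[str, ...]]


class SharedList:
    """A single shared list that keeps every earlier version for historic access."""

    def __init__(self) -> None:
        self._versions: List[Version] = [("initial", ())]

    def draw_down(self) -> List[str]:
        """Return a working copy of the latest version."""
        return list(self._versions[-1][1])

    def save(self, editor: str, items: List[str]) -> None:
        """Store the edited copy as a new version; earlier versions are untouched."""
        self._versions.append((editor, tuple(items)))

    def history(self) -> List[Version]:
        return list(self._versions)


shared = SharedList()

working = shared.draw_down()
working.append("Tobacco use - 25 cigarettes/day")
shared.save("GP", working)

working = shared.draw_down()
working.append("Unstable housing - lives in garage")
shared.save("ED registrar", working)
```

The next HCP calling `shared.draw_down()` sees both items, and `shared.history()` still holds the list as the GP left it.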

I agree. This is then patient-centric which surely is our aim, isn’t it?

To provide some additional reading to this discussion which may provoke ideas, there are international examples that we can use as a baseline. That is if we’re simply discussing a set of SNOMED CT Concept Codes that could be considered as options for a user to choose in a problem list. (This is only part of the wider conversation we’ve been having to date)

Regarding Standards Development and/or creation (and maintenance), best practice suggests that we should beg, borrow and steal from other jurisdictions and adapt to our own needs rather than starting from scratch. Further to this, we need a robust process to maintain these subsets because they evolve just as the medical and healthcare professions evolve.

This, in itself, has its challenges, as SNOMED CT concept codes that exist in the below subsets will likely include codes from their corresponding national extensions (eg. Canadian Extension), which do not necessarily translate to an equivalent NZ code here (this may require a SNOMED Author to create/map localised versions of the same/similar concept).

Some of the available options for us to review from an international standpoint:

Australia:

- Problem/diagnosis reference set (available for download from below link, with an account)

- National Clinical Terminology Service (NCTS) - responsible for managing, developing and distributing national clinical terminologies and related tools and services to support the digital health requirements of the Australian healthcare community

https://www.healthterminologies.gov.au/access

US:

- UMLS SNOMED Core Problem List

CORE Problem List Subset includes SNOMED CT concepts and codes that can be used for the problem list, discharge diagnoses, or reason of encounter

https://www.nlm.nih.gov/research/umls/Snomed/core_subset.html

- VSAC - Value Set Authority Centre - a repository and authoring tool for public value sets created by external programs

https://vsac.nlm.nih.gov/

Canada

- Terminology Gateway: “Health Concern Code Subset Commonly Used” (Domain - primary healthcare)

Represents the ‘Most Commonly Used’ clinical problems, conditions, diagnoses, symptoms, findings and complaints, as interpreted by the provider

https://tgateway.infoway-inforoute.ca/html/

UK:

- NHS Data Dictionary - contains specific subsets based on clinical specialty eg. Maternity Services

https://www.datadictionary.nhs.uk/data_dictionary/messages/clinical_data_sets/clinical_data_sets_introduction.asp?shownav=1

- TRUD - SNOMED CT human-readable subset - Diagnosis

https://isd.digital.nhs.uk/trud3/user/guest/group/0/pack/40/subpack/289/releases

Be wary, some of these services have their limitations.

eg. TRUD: https://medium.com/@marcus_baw/why-the-trud-must-die-ac27377ee189

These are just some international exemplars which host comprehensive data release services, something NZ is currently under-resourced to provide. Our equivalent is MoH’s HISO, which operates on a shoestring and does not have any services for clients to consume (eg. APIs).

I’ve been looking through the discussion from 2019 on this forum regarding problem lists. As a result of a search on the UK section of Discourse, I’ve come across another resource which relates to this discussion which I’m pasting here.

Kia ora koutou . . . entering from rural GP land

This is the first mention of ‘patient-centered’ in the thread, so thank you @Carey . Any requirements for any part of a patient summary template must link in to the ‘Patients needs and goals’, as defined by the patient/whānau.

Therefore, from inception, how the list feeds into patient portals, and how patients/whānau engage with this list, needs consideration. It also speaks to using coding to ensure the language is as simple as possible, while alerting any health provider to the diagnostic issue.

Having design built around being patient-centered, rather than system-centered, hasn’t really happened yet . . . how can this particular piece of work re-orient the design thinking to being about the patient/whānau’s perspective (e.g., their needs/goals)?

Somehow, any system must ensure that at creation of the list, and certainly as it’s being used, there is direct engagement with the person whose list it is. I appreciate this is about use, rules-of-use, and user interface . . . but, it’s so crucial that I’m curious how this can feature in discussions on creating the list itself.

Our new health reforms are about trying to break down silo aspects of the system . . . if this process isn’t thinking about primary care, too, there will be further problems as primary vs secondary is meaningless to patients who transition across services regularly.

This is an opportunity to bridge transitions, so how will primary care lists be considered in this conversation?

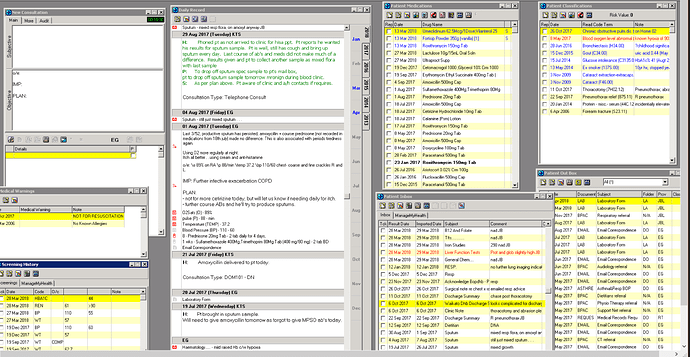

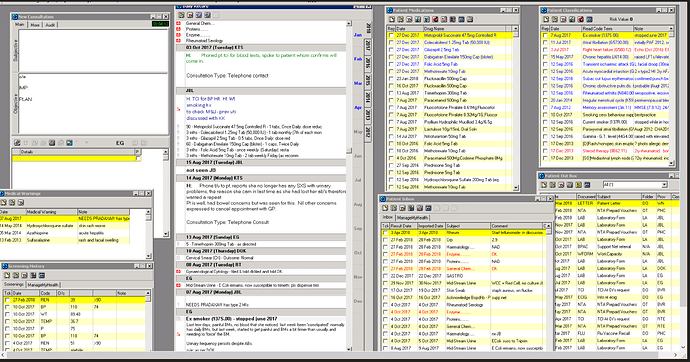

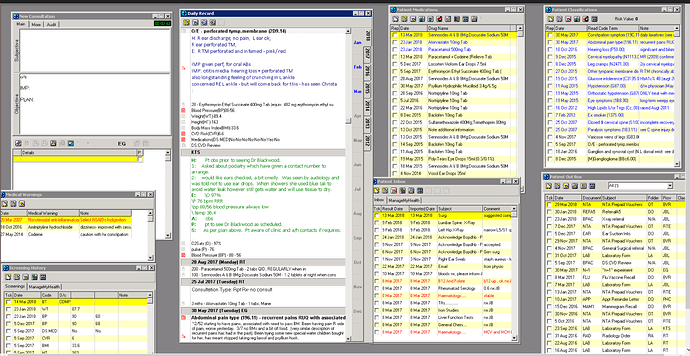

To keep things real, and to demonstrate we need to focus on how this process will improve health for those with the most needs, here are de-identified snapshots of how real health data looks (in our NZ-grown MedTech).

The ‘Classification’ is the equivalent of the ‘Problem List’ being discussed. What should be in the ‘problem list’ needs to be a clinical decision in alignment with patient needs/goals. Therefore, whether medication allergies are included (or not) should not be predetermined (e.g., not excluded, but not mandatory). Also, character-limited free-text is crucial to contextualize a diagnosis to the particular patient (e.g., please see ‘note’ next to classifications in snapshots → character limitation is great as it forces clinicians to really focus on what is crucial to convey. Saw a junior simply copy and paste a radiology report next to a diagnosis in a free-text ‘problem list’! This must not happen).

Notably, the current EHRs are NOT centered around patient/whānau, so the patient needs/goals are currently NOT well captured or documented. Though much can be improved (namely interoperability), MT32 still is great for clinical flow. Please note the use of colors (e.g., on classifications) to denote what’s current/active, really important, or historical.

PS- That’s an excellent summary and great place to start. Patients needs/goals not featured, but really good approach. I note a GP was part of the advisory group who produced this document, and there was clear description of the Problem List across different settings. Thanks for sharing!!!