Hi @rradecki

I’ve been playing a bit with Chat GPT. I also have a few thoughts on that dog.

The dog

I think there is one thing worse than dissing your vet on Twitter, and that is enthusiastically embracing a new and powerful technology based on anecdote, especially n=1.

I can see similarities to the patient who comes to clinic clutching a bucketful of information they’ve gleaned from the web. This can provide very valuable information. But quite often the bucket is full of red herrings. This should also be distinguished from the patient with an identified, rare, chronic condition—where quite often they are more knowledgeable about their disease than the average doctor, and sometimes even more so than an expert in the field. I see the role of the clinician here as providing much-needed pathophysiological insight and a therapeutic context, which the patient may still require to complete their picture.

I find it interesting how Chat GPT embraced the idea that you treat babesiosis with an ‘antidote’, too.

The problems

Just today there were several articles on this topic in the New England Journal of Medicine, notably this one: https://www.nejm.org/doi/full/10.1056/NEJMsr2214184. They seem to have missed a lot of the important issues.

The obvious issues with Chat GPT are its glib ability to fill in areas of lack of knowledge with what has been termed ‘hallucinations’, and the fact that it can pretty much only work on the corpus of knowledge it’s been supplied with.

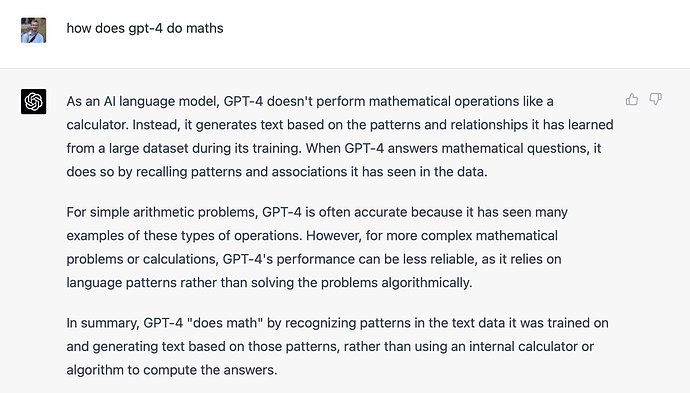

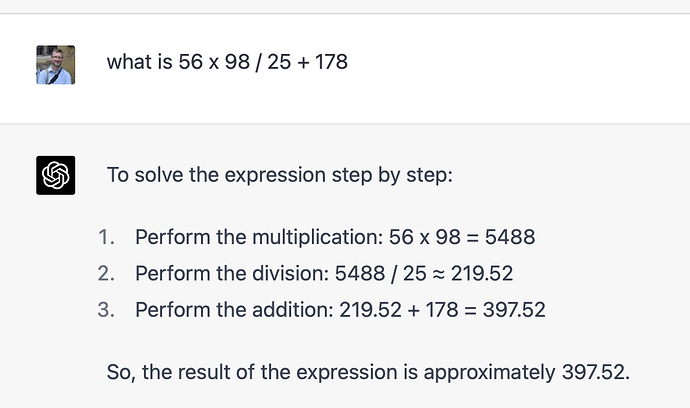

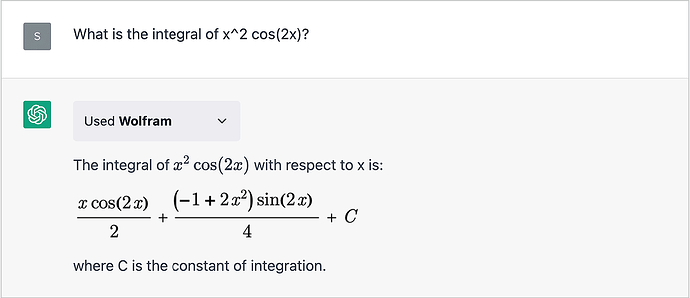

A much bigger issue is that—as you hint—it has no internal model whatsoever. It doesn’t ‘get’ meta-cognition or counterfactuals. It is ‘merely’ dealing with word association. As you pointed out, it is crap at maths, but it also cannot test its internal model against reality, because it doesn’t have one.

Playing with Chat GPT

It is fun to play with. A lot of fun. For example, with adequate specifications, it can compose a non-trivial program written in say Perl that runs perfectly, first time.

It can also be infuriatingly stupid, not just owing to its limited, biased knowledge base (women and minorities get short shrift) but also because it lacks metacognition or any underlying model of the process under discussion. Let’s try an example

Me: Can you state Ohm’s law and its cardiovascular analogy?

Chat GPT: Ohm’s law states that the current flowing through a conductor is directly proportional to the voltage applied across it, and inversely proportional to the resistance of the conductor. It can be expressed mathematically as:

I = V/R

where I is the current flowing through the conductor, V is the voltage applied across it, and R is the resistance of the conductor.

In the cardiovascular system, a similar analogy can be made by comparing blood flow (I) to electrical current, blood pressure (V) to voltage, and vascular resistance (R) to electrical resistance. Therefore, the analogy of Ohm’s law for the cardiovascular system can be expressed as:

Blood flow = Blood pressure / Vascular resistance

This was followed by an adequate description of the analogy. But then I asked…

Me: Can you use that second equation to explain why blood pressure might drop in a patient with vasodilated septic shock?

Chat GPT: Yes, the equation Blood flow = Blood pressure / Vascular resistance can be used to explain why blood pressure might drop in a patient with vasodilated septic shock.

Septic shock … [blah, blah]

In other words, the decrease in vascular resistance (R) in septic shock leads to an increase in blood flow (I), which must be compensated for by an increase in blood pressure (V) …

Chat GPT was subsequently profusely apologetic that it had things back-to-front, but still didn’t seem to ‘get’ the model it had just described. So I then asked it to rearrange the equation, making Blood pressure the dependent variable. Which it did. Now check this out …

Me: Teleologically speaking, which of the two variants of the equation makes more sense in terms of the ‘objectives’ of cardiovascular homeostasis?

Chat GPT: Both variants of the equation, Blood flow = Blood pressure / Vascular resistance and Blood pressure = Blood flow x Vascular resistance, are important for understanding cardiovascular homeostasis and the regulation of blood flow and pressure in the body.

However, from a teleological perspective, the second variant of the equation, Blood pressure = Blood flow x Vascular resistance, may make more sense in terms of the objectives of cardiovascular homeostasis. This is because blood pressure is a critical parameter that needs to be regulated to maintain adequate perfusion to the body’s organs and tissues.

In the cardiovascular system, blood pressure is regulated by a complex interplay of factors, including cardiac output, vascular resistance, and hormonal and neural regulation. By expressing blood pressure as a dependent variable that is influenced by changes in blood flow and vascular resistance, the equation highlights the importance of maintaining an appropriate balance between these factors to achieve optimal cardiovascular function.

In other words, the equation emphasizes the idea that changes in blood flow or vascular resistance can have a significant impact on blood pressure, and that the body needs to adapt and regulate these factors to maintain adequate perfusion to the tissues. This is an essential aspect of cardiovascular homeostasis, as changes in blood pressure can have serious consequences for organ function and overall health.

Overall, while both variants of the equation are important for understanding the cardiovascular system, the second variant may be more relevant from a teleological perspective, as it highlights the central importance of blood pressure regulation in achieving cardiovascular homeostasis.

This is so impressive that you might be forgiven for forgetting that all of this is simply pattern matching. I won’t bore you with my subsequent enquiry into what affects blood flow (assuming SVR is fixed) but this amply confirmed what we already know about pattern matching—but no model!

Other issues

There are even bigger issues. One is that re-training these bots is non-trivial, so the tendency is to cast the oracle in stone. It will not adapt to changing knowledge or circumstances until the next expensive iteration—which will no doubt be dictated by economic factors. GPT-3 cost at least US$ 4.6 million to train (with a huge carbon footprint). GPT-4 likely a lot more.

A second issue is how inscrutable this all is. Chat GPT is triply inscrutable because not only don’t we know what went into the mix (the data are secret), or how this was done (the source code is a secret), but we also have the usual issue of the ‘explanation’ for a recommended course of action depending on nothing other than a matrix of coefficients—that cannot be explained. (Even its ‘explanations’ are more of the same!)

A third issue pertains more to societies where legalism prevails (like the US) because you can be sure it’s only a matter of time before Chat GPT is invoked (if not put on the witness stand!) to explain what a stupid decision the hard-working doctor made in the middle of the night, looking after a dozen sick people.

The consequence will surely be that “just to be sure” more and more clinicians—especially younger ones—will ask Chat GPT, who will recommend a huge bunch of extra investigations, increasing not just costs, but also the VOMIT syndrome (Victim Of Medical Imaging Technology) where ‘incidentalomas’ result in not just extra tests, but harm related to those extra tests.

But just yesterday I gave my house officer ‘homework’ and he seemed more than happy to do this simply by asking Chat GPT. (I was less than happy).

You should also note that the approach taken by Chat GPT is provably sub-optimal, because it does not use Bayesian logic in attacking problems.

The BIG issue

In my opinion however, the really big issue relates to whether we can learn to leverage the smarts of this new technology without allowing ourselves to become dumb. If our modelling and diagnostic abilities atrophy because of the convincing spiel of the chatbot, we will become poorer and medical innovation and improvement will be terminally stifled.

My 2c, Dr Jo.