I asked ChatGPT to write my HINZ presentation for me on HCI predictive modelling. I think it did a pretty good job:

That’s outstanding. I was asked to present on the difference between Service Design and Lean Six Sigma, so I thought ChatGPT might be up to the task!

Funny - I asked Stable Diffusion to give me some pictures of how we can solve problems in the health system:

It seems all of our problems can be solved by a combination of white coats, stethoscopes and undecipherable symbols.

Jon

Looks like it has invented a new version of the stethoscope too with various extra bells and tubes. This confirms my suspicion that all that amazing AI artwork was the AI cobbling together existing artwork (done by humans!) in its database… I suspect the essays are also largely patched together existing essays (written by humans) too. Very convincing though… (the essays - the stock photos not so much).

I was able to get ChatGPT to write an article for me with sources:

Interesting - it looks like it might have made up the URLs of the sources? I wonder if it invented the quotes as well?

Oh wow, that’s what I get for not checking the sources! I have updated the article

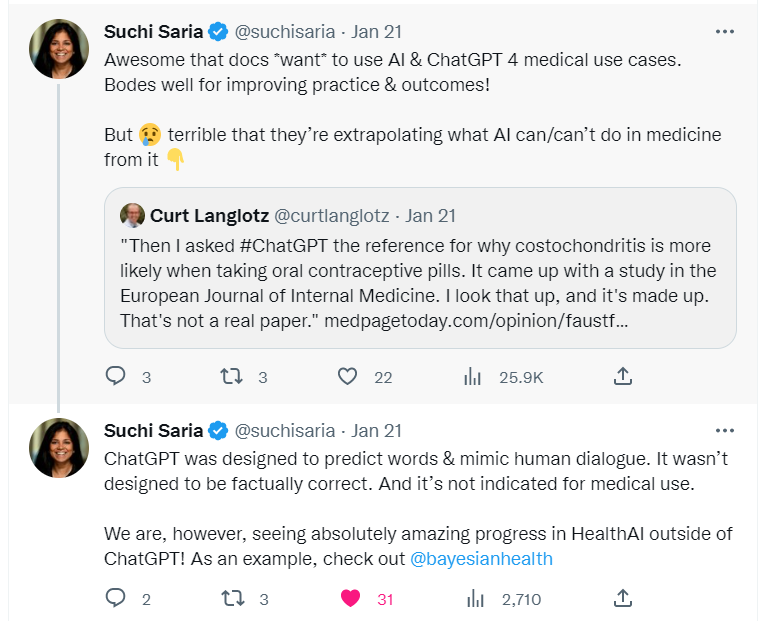

It seems ChatGPT does not have any inhibitions about making things up. Here is another example, which I’ve copied from a post on the UK Open Forum discussion board:

If you have access to the UK Open Forum, you can read the discussion on ChatGPT there:

https://discourse.digitalhealth.net/t/potential-uses-of-chatgpt-in-the-nhs/28024/10

@Pera_Barrett has also shared here on eHealth Forum his experience with AI making things up when he asked it to provide a summary of a short story. The way it made up a new ending to the story reflects AI’s tendency to follow societal biases rather than always sticking to the facts.

And not so much ChatGPT as it relates to health and health sciences, but I think some of Nick Cave’s thoughts could be extrapolated to human interactions - be it clinical or with colleagues such as at a meeting:

Sounds like Nick is worried…

ChatGPT works by predicting which next word in a sentence sounds most human, based on feedback its trainers gave on previous sentences. I think the people who were paid to train it just told it whether a sentence seemed believable, rather than checking the URLs or whether the facts were correct. This produces a nice demo but will cause problems below the surface, which is probably why Google have not implemented it for search.

It doesn’t seem to have so many problems with code because they also paid computer scientists to give it feedback, so it could predict not just what looked like it might be right but what was actually right.
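To make the "predict the next word" idea concrete, here is a toy sketch in Python. It is nothing like the real ChatGPT internals (which I have not seen); it just counts which word follows which in a tiny made-up corpus, then lets a hypothetical `feedback` weight from human raters nudge the choice. The function names, the corpus and the weights are all my own assumptions for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """Count how often each word follows another in the training text."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            follows[prev][nxt] += 1
    return follows


def predict_next(follows, word, feedback=None):
    """Pick the next word with the highest score, or None if the word is unseen.

    Score = how often the candidate followed `word` in the text, multiplied by
    a hypothetical rater weight (1.0 when no feedback was given for it).
    """
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    feedback = feedback or {}
    return max(candidates, key=lambda w: candidates[w] * feedback.get(w, 1.0))


# Made-up training text: nothing here says which continuation is actually true.
corpus = [
    "the study was published in nature",
    "the study was published in nature",
    "the study was published in 2019",
]
model = train_bigrams(corpus)

most_common = predict_next(model, "in")
after_feedback = predict_next(model, "in", feedback={"2019": 3.0})
```

Here `most_common` comes out as `"nature"` simply because it appears most often, and `after_feedback` switches to `"2019"` because the raters preferred it. At no point does anything check whether the study really was published there, which is roughly the problem with the invented URLs and quotes.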

I think it will fairly rapidly improve - I have seen some references now that are correct - i.e. the URLs are real and the articles do actually contain the information it cites. It’s also now improving its maths etc. It really depends on who OpenAI get to do the training. I suspect they might leave it up to the users which could spiral into something very strange.

At the moment, using it as a healthcare chatbot could be very unreliable and potentially dangerous.