Now - nearly June 2024 - are more GPs and other clinics using Nabla? Or is there anything better?

Hi @Andrea, see this article in eHealthNews and eHealthTalk NZ podcast episode 42 with @karl and @richard.medlicott (https://www.hinz.org.nz/page/PodcastEpisodes or wherever you get your podcasts).

Current estimates are that around 400 GPs are trialling it, and I know a number of PHOs and the RNZCGP are working on guidance around use of these tools.

Thank you! Great to know there is a study on its use. Hope to hear more soon!

Andrea

Kia Ora. Any more developments from Primary Care on this?

A wee update on the situation in secondary care from the most recent D&D Pānui:

Health NZ tests AI for clinical notes

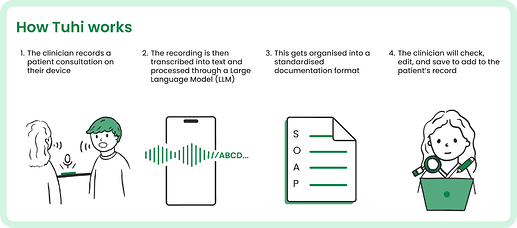

Health NZ is testing AI-driven clinical documentation to alleviate the burden on clinicians. Our New Technology & Innovation team, in collaboration with Awa Digital, has created an app named Tuhi, currently being piloted by 15 clinicians. The app utilises large language models (LLMs) to transcribe voice into text and transform these into comprehensive clinical notes. This technology aims to lessen cognitive load and enhance clarity in clinical reasoning. Te Whatu Ora handled 75.5 million outpatient and community visits in the first quarter of the year, and using this at scale and saving just one minute per visit translates to potential cost savings of $50 million. However, challenges like data security, affirmation bias, and the need for models to handle mixed languages and accents, including local ones like Te Reo and tikanga, persist. We are addressing these issues with up to 50 beta users at Wellington Hospital. A formal evaluation will also assess the Tuhi app’s acceptability and experience to enhance healthcare delivery efficiency and effectiveness.

This initiative marks a major advance in leveraging AI to enrich healthcare delivery. Te Whatu Ora is dedicated to investigating and deploying these technologies to enhance the efficiency and efficacy of clinical documentation.

For more information about Tuhi contact @jon_herries, New Technology and Innovation
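
A rough sanity check on the savings figure in the Pānui item above, as a minimal Python sketch. It assumes the $50 million applies to the same 75.5 million visits and that exactly one minute is saved on every visit; the variable names are illustrative and not from Health NZ.

```python
# Figures quoted in the Pānui item; how they relate is an assumption.
visits = 75_500_000              # outpatient and community visits in the quarter
minutes_saved_per_visit = 1      # "just one minute per visit"
claimed_savings_nzd = 50_000_000 # "$50 million"

# Total clinician time freed up, in hours.
hours_saved = visits * minutes_saved_per_visit / 60

# Hourly cost of clinician time that the claim implies.
implied_hourly_cost = claimed_savings_nzd / hours_saved

print(f"{hours_saved:,.0f} clinician hours saved")
print(f"implied cost of about ${implied_hourly_cost:.2f} per clinician hour")
```

On those assumptions the claim works out to roughly 1.26 million clinician hours, valued at a bit under $40 per hour.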

Does Tuhi anonymise the data before uploading to the LLM? If so, how is this done? It is something I have been confused about with commercial AI scribes - I’m not sure how this could be done reliably without using an LLM to do it in the first place! It would be good to see some data on how reliable anonymisation is.

My feeling is that we should wait for reliable on-device LLMs before using AI scribes with confidential patient data. I don’t think this is very far off although on-device models will probably be smaller and more prone to hallucinations. If I was a patient I would probably prefer more hallucinations (if the note can be properly checked by me and my clinician) than having my data sent to a cloud-based LLM.

Interesting JAMA article on AI scribes - this section piqued my interest:

“Third, even summaries that appear generally accurate could include small errors with important clinical influence. These errors are less like full-blown hallucinations than mental glitches, but they could induce faulty decision-making when they complete a clinical narrative or mental heuristic. For example, a chest radiography report noted indications of chills and nonproductive cough, but our LLM summary added “fever” (Figure, C). Including “fever,” although a 1-word mistake, completes an illness script that could lead a physician toward a pneumonia diagnosis and initiation of antibiotics when they might not have reached that conclusion otherwise.”

I also like this related/unrelated JAMA commentary for its disdain for the concept of “vigilance” in clinical proofreading – but I would say some of its 5 proposed potential solutions border on dystopian …

I suspect the answer to this is to take an empirical approach - we don’t really know how problematic AI is going to be so we should design studies that assess whether or not AI systems reduce errors and improve efficiency in real world use. I suspect that they probably do improve things at the moment (i.e. automated summaries are better than human-written ones, even with some hallucinations) and will greatly improve things in the near future due to the rapid advance of the technology. That said, I can see scenarios where the use of AI could cause serious harm at quite a large scale (LLM-based health chatbots for example) so we need to be very careful going forwards.

No - we are using a private instance.

Jon

Hopefully you are using a model with better performance on the hallucination front than OpenAI’s Whisper:

Here’s one of the studies referred to in that article:

I personally think that hallucination is going to become a non-issue fairly soon. There are fairly obvious technical solutions to hallucinations but they cost money in terms of compute (e.g. RAG, chain of thought, etc.). LLM services seem to prefer just to say ‘don’t use for medical purposes’ rather than offer solutions that don’t hallucinate but that cost more to run.

I’m hopeful that Apple will build in on-device LLMs that do accurate transcription and summarisation securely as part of Apple Intelligence. They seem to be delaying release at the moment though, probably because it is not yet good enough for them in internal tests.

I think a discussion about clinical use of AI should include some thoughts on teaching clinical reasoning… so just putting my 2 cents in here at the risk of being too theoretical…

One book I have is “Teaching Clinical Reasoning” by Robert Trowbridge et al.

At least this should help in developing clinical decision support if that’s a priority for anyone… not sure about AI…

I am literally just finishing up an assignment for a bioethics paper for my Digital Health postgrad on ambient AI scribes, and whether the promised benefits of lessening the effects of burnout on (ambulatory care) clinicians and subsequently patients are worth the potential risks.

There are a large number of mainly US-based (per Meaningful Use mandates) journal articles on this topic.

If I had ignored eHealthnews.nz updates I wouldn’t have had to keep re-writing it

Ooh, this sounds fascinating!

I think the expectation that it would be “100% right” hits the mark but misses the point for a few reasons:

- The typist doesn’t always get it right when you dictate a letter.

- Sometimes humans misdiagnose patients even when we have the right information in front of us.

- Sometimes we mishear patients or forget to write down what we heard or write down the wrong thing.

The biggest takeaway if you are trying these tools: don’t succumb to automation bias (only a somewhat Sisyphean task). They will do 80% of the job, not 100%. You need to review, edit and confirm that they are right.

I think in that context they provide a lot of value even if they only do 80% of the job (@Ruth_Large posted some great reasons on LinkedIn).

Just to stir the pot - perhaps if they did 100% you could have robots be clinicians?

Well, it has been radio silence from Health NZ about whatever happened to Tuhi (which sounded like a genuinely good idea).

At least the press is asking questions: